Great computer vision applications require quality visual data, and a lot of it. But what happens when you don’t have enough data?

Startups face the biggest data challenge in applying AI solutions. The data either doesn’t exist or there isn’t enough of it. Most growth- and enterprise-stage companies have a fair amount of data to work with but even then, sometimes the data doesn’t have all of the attributes of a good data set.

What makes a good data set?

To be used in computer vision, a data set must, at a minimum, meet four characteristics: variance, quality, quantity, and density. Let’s say you wanted to train a system that navigates traffic for autonomous vehicles. For this project, a good data set would have:

1. Variance - Images should include many different kinds of objects of interest, such as motorcycles, sedans, minivans, SUVs, and trucks. There are different makes and models of each vehicle. The data set could include different roadways, such as highways, city streets, or rural roads. The goal is to mimic the variance of real life in the data.

2. Quality - High-resolution images make it easier to annotate with quality, which will result in better training for your machine learning models. Quality also can mean images without human-generated obscurities in the real world, such as object truncation due to imperfect camera angles.

3. Quantity - You will want plenty of data to work with. The more images you have, the better. You can never have too much data when it comes to training machine learning models.

4. Density - There are plenty of target objects in the images, to reflect real-world conditions. If you have images with only one or two cars each, you may need more density in your images.

The data acquisition challenge

We addressed this topic in a recent webinar, Building Your Next Machine Learning Data Set. Generally, there are three ways to acquire data. You can use open data, create your own, or hire a third party to create it for you. There are pros and cons to each approach, and it’s worth thoughtful consideration before making a decision.

Let’s take a closer look at your options:

1. Use open data. These are easily accessible and available to use, typically online. Individuals, businesses, governments, and organizations created them. Some are free, and others require the purchase of a license to use the data. Open data is sometimes called public or open source but it generally cannot be altered in its published form. It is available in various formats (e.g., CSV, JSON, BigQuery).

Some open data sets are annotated, or pre-labeled, for specific use cases that may be different from yours. For example, if the labeling does not meet your high standards, that could negatively impact your model or require you to spend more resources to validate the annotations than you would have by procuring the right-fit data set in the first place.

PROS: Convenient | Low-to-no cost

CONS: Data features and data quality may not be to your specifications | May require validation and rework | Good for testing the concept for your model but not sufficient for deploying and maintaining a machine learning model

Here are a few open data sources that are well maintained and feature a variety of image data for computer vision. Some include pre-labeled data:

- Awesome Public Datasets - Created by GitHub users, this list features data related to agriculture, government, the sciences, transportation, and sports.

- AWS Registry of Open Data - This is Amazon Web Services’ source for open data.

- Google Dataset Search - This is Google’s available datasets. You also can add your own.

- IEEE DataPort - This site allows you to store, search, access and manage standard or open access datasets across a broad scope of topics.

- Microsoft Research Open Data - This group of datasets includes visual data for computer vision in healthcare, sciences, and education.

- Kaggle Datasets - This site offers 19,000 public datasets and 200,000 public notebooks.

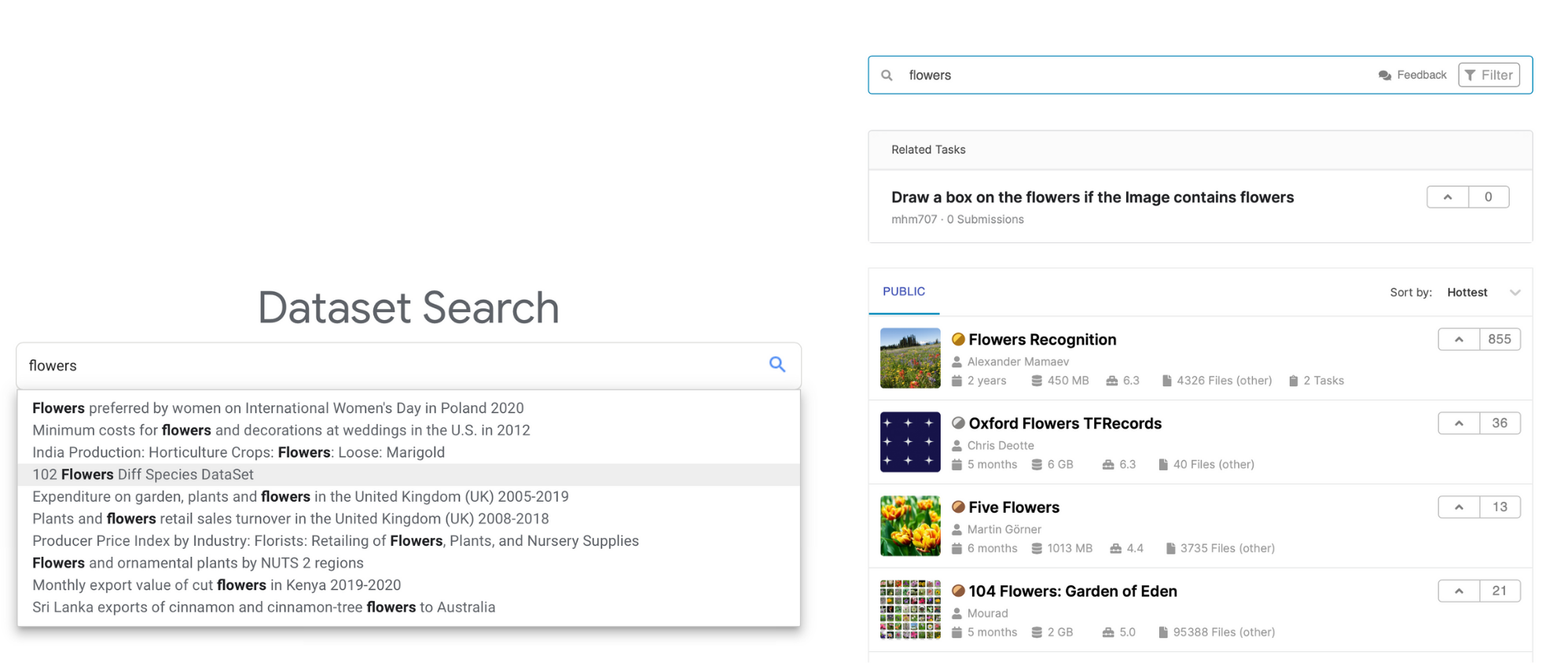

This Google Dataset Search (left) and Kaggle search for “flowers” shows how your search results can vary by source.

2. Create a custom data set. You can build your own data set using your own resources or services you hire. You can collect data manually, using software tools, such as web-scraping tools. You also can gather data using devices, such as cameras or sensors (e.g., LiDar). You may use a third party for aspects of that process, such as the building out of IoT (Internet of things) devices, drones, or satellites. You can crowdsource some of these tasks to gather ground truth, or to establish real-world conditions.

Before you begin building your own datasets, you will have important decisions to make about your image annotation workforce and your data annotation tool.

PROS: You can build to your rules and feature specifications | Resulting intellectual property (IP) may be valuable

CONS: Takes time and resources to gather | You will shoulder the project management and HR responsibilities

3. Partner with a third party to create data sets. Here, you work with an organization or vendor who does the data gathering for you. This may include manual data gathering by people or automated data collection, using data-scraping algorithms.

This is a good choice when you need a lot of data but do not have an internal resource to do the work. It’s an especially helpful option when you want to leverage a vendor’s expertise across use cases to identify the best ways to collect the data.

One such provider is Keymakr, a company that provides custom raw data collection and annotation services. Another vendor, Q Analysts, provides data collection, ingestion, and annotation services.

PROS: You can build to your rules and feature specifications | Resulting intellectual property (IP) may be valuable | May leverage third-party domain knowledge of your use case

CONS: Can be expensive

However you acquire the images you use for your computer vision project, you will need to go through the process of collecting the data in tiers so you can annotate the data and use it to run your model to ensure it is a good fit for the algorithm you are creating. Once you see how it works, you can adjust it to mitigate any implicit or explicit bias, then collect and run more data.

These cycles of collecting, annotating, and using small groups of data will help you understand what works best in terms of your model, time, and cost. The goal is to use the right amount of data required to generate the best results for your model. In a future article, we take a closer look at this process and share best practices for creating a custom data set.

Computer Vision Image Annotation AI & Machine Learning Data Acquisition

![Building Your Next Machine Learning Data Set [Webinar]](https://no-cache.hubspot.com/cta/default/351374/c90e3835-662f-4989-b6da-9a8aaf4bd0d1.png)