Lungs are tough to study. The ribs, heart, and diaphragm get in the way.

In normal times, it’s not an issue. Radiologists can quickly and efficiently work their way around images to spot cases of pneumonia and other ailments.

But these aren’t normal times. Understanding what COVID-19 does to lungs is critical in developing treatment strategies. It’s a novel virus, and early research suggests the damage it wreaks in lungs is novel as well. Chest X-rays are a common part of the intake process for people with respiratory ailments, so hospitals need to identify COVID-19 cases quickly and confidently to manage critical resources.

Alberto Rizzoli wanted to apply his company’s machine learning expertise to lung images to help researchers study COVID-19 and, eventually, help clinicians spot and triage more serious cases earlier. The company, V7 Labs, is working with us at CloudFactory to make that happen. V7 also wanted to apply machine learning in a way that will help researchers understand other lung conditions going forward.

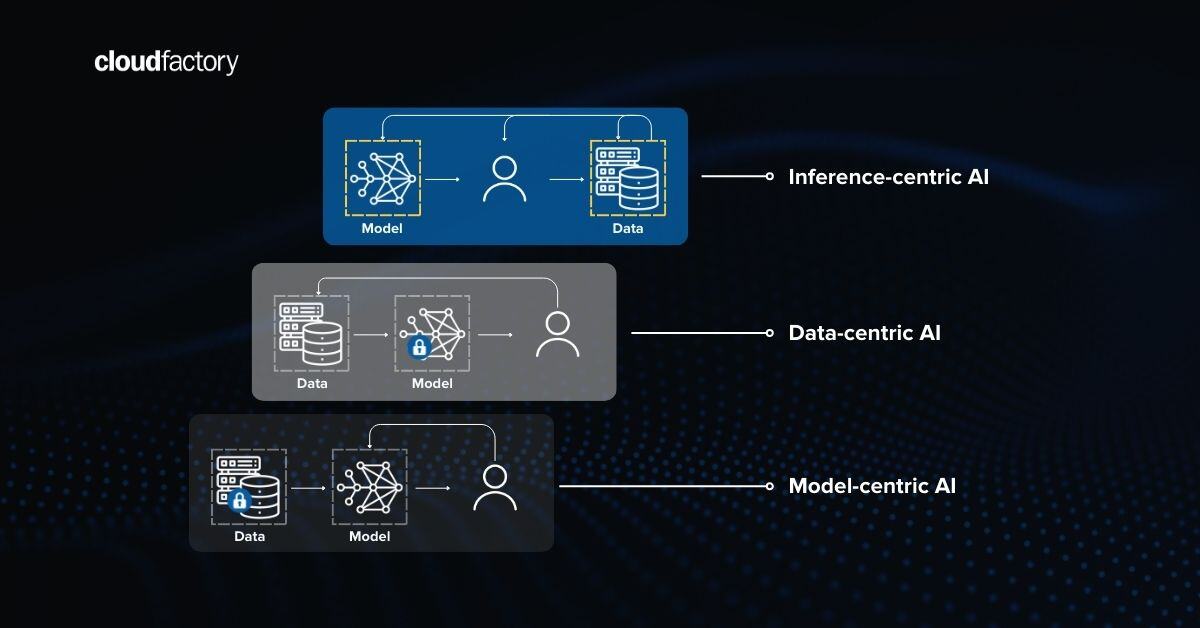

“There isn't a labeled dataset of this level of scale and diversity out there,” says Rizzoli, the co-founder of V7. “What we've seen is companies have rushed to the media to publish results on models that use the whole picture of an X-ray and perform classification.” These studies apply machine learning to identify X-rays of COVID-19 patients. But the differences between images with COVID-19 pneumonia and those without can be spotted “by the naked eye, as they come from different sources, machines, or patient age groups,” Rizzoli explains.

V7 wanted to use machine learning to go deeper, exploring elements of the lung that could provide additional clues about COVID-19’s clinical path and damage. It also wanted to create a dataset that can be used for other lung research and for training machine learning models for clinical studies. V7 collected 6,000 lung images from multiple open-source datasets -- a mix that includes patients with and without COVID-19. The company then helped train CloudFactory’s managed workforce in Nepal to use V7’s Darwin annotation tool, which combines AI-driven auto-labeling with precise human-led image annotation, to optimize the data for machine learning.

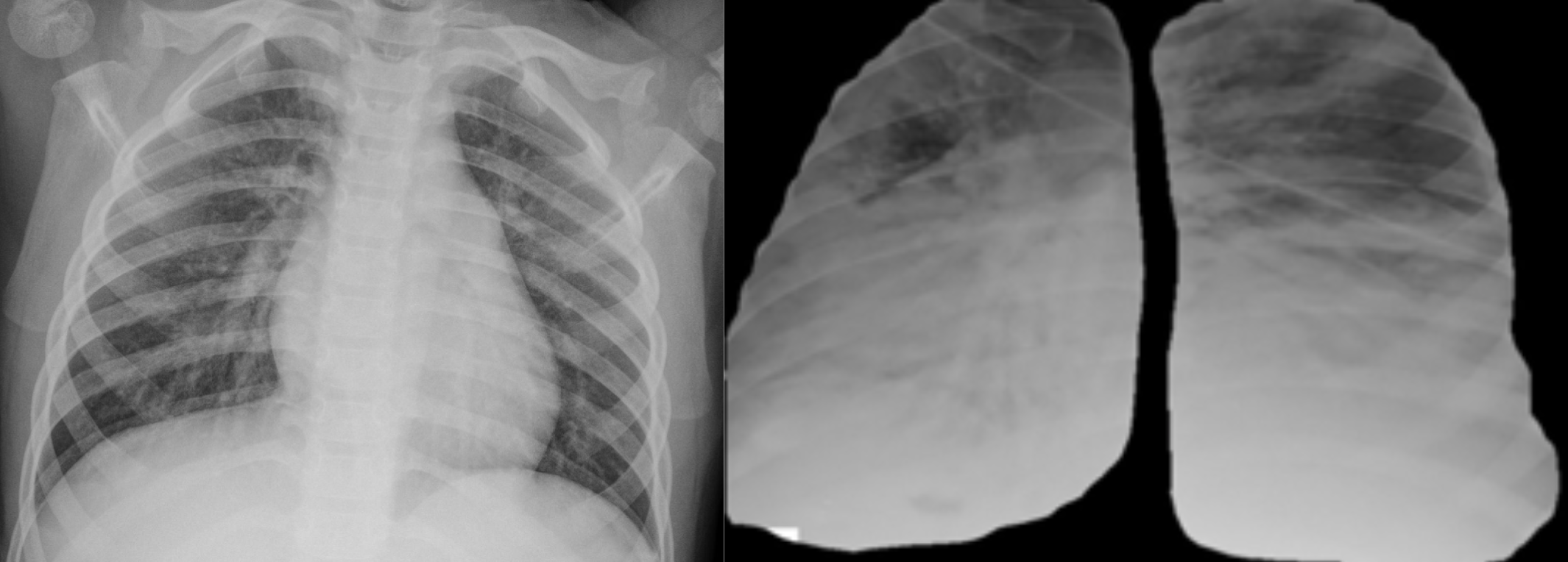

“The goal is to find visual characteristics that would otherwise not be picked up,” Rizzoli says. In addition, V7 wants to provide a dataset in which other types of characteristics can’t be seen -- so they can’t bias the object detection models. For instance, whole chest X-rays show the rib cage and shoulder bones, which provide telltale clues about the age of the patient.

Why keep the patient’s age from a researcher? A model could end up being trained with a bias to “notice and reward itself by recognizing old people because they're almost certainly sick, so right now what we're able to do is isolate the lungs in an unbiased way.”

The CloudFactory team helped eliminate that bias by segmenting out the portions of the chest X-ray that obscure the lungs -- the ribs, the heart, and sometimes the diaphragm. “We want images of just lung tissue.”

Left: Unlabeled chest X-ray with heart and other organs present. Right: Masked-normalized lungs, achievable thanks to the segmentation annotations. These can greatly improve the performance of classification.
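To make the idea of "masked-normalized lungs" concrete, here is a minimal sketch of how a segmentation mask might be applied to a chest X-ray. It is an illustration only, not V7's pipeline: the function name `mask_and_normalize`, the assumption that annotations have already been rasterized into a boolean mask, and the choice of min-max scaling over lung pixels are all hypothetical. The article does not say which normalization V7 uses.

```python
import numpy as np


def mask_and_normalize(xray: np.ndarray, lung_mask: np.ndarray) -> np.ndarray:
    """Keep only lung pixels and rescale them to the range [0, 1].

    xray      -- 2-D grayscale image (hypothetical input, any numeric dtype)
    lung_mask -- 2-D boolean array, True where an annotator marked lung tissue
    """
    if xray.shape != lung_mask.shape:
        raise ValueError("image and mask must have the same shape")

    mask = lung_mask.astype(bool)
    out = np.zeros(xray.shape, dtype=np.float32)  # everything outside the lungs stays 0
    if not mask.any():
        return out  # no lung pixels were annotated

    lung = xray[mask].astype(np.float32)
    lo, hi = lung.min(), lung.max()
    if hi > lo:
        # Min-max scaling over lung pixels only (an assumed normalization),
        # so ribs, heart, and scanner differences outside the mask have no effect.
        out[mask] = (lung - lo) / (hi - lo)
    return out


# Toy 3x3 "X-ray": the centre column stands in for lung tissue.
xray = np.array([[200, 50, 210],
                 [220, 100, 230],
                 [240, 150, 250]], dtype=np.uint8)
lung_mask = np.array([[False, True, False],
                      [False, True, False],
                      [False, True, False]])
masked = mask_and_normalize(xray, lung_mask)
```

Because the scaling statistics come only from pixels inside the mask, bright structures such as bones outside the lungs cannot shift the intensity range a downstream classifier sees.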

Choosing to Work with CloudFactory

Rizzoli reached out to CloudFactory because we’ve known each other for several years and previously worked together on a project involving microscopy annotations. He says he likes working with CloudFactory because we take more of a partnership approach.

“CloudFactory is interested in maintaining a relationship. You are interested in more than just taking the job and doing it. There is a larger interest in the whole project and it creates this feeling that you are an extended part of our team. That’s more attractive than an outsourcing company that is more transactional.” CloudFactory’s managed workforce is also well-trained in annotating visual data, so onboarding the annotation team to the project was easier.

Next Steps for the Project

As of today, the annotated data is available on GitHub with additional support documents. The open, annotated data will be available to any researcher free of charge. “The goal is to have people stop using plain lung images without annotations. Now that this dataset is out there, there is no excuse,” Rizzoli says, adding that it will be offered in a way that can be directly imported into PyTorch or TensorFlow for their own analysis.

V7’s approach is unique. Rizzoli says the models have successfully identified COVID-19 and other lung ailments during preliminary tests but their efficacy will need to be confirmed through official clinical tests.

Meanwhile, V7 is continuing its research. Once it receives permission, V7 wants to match lung images to clinical notes on patients so that its data scientists can train the algorithm to detect levels of severity of COVID-19 in patients whose initial lung X-rays didn’t suggest a bad case. “We've been given a thumbs up for testing the resulting algorithm on the data from San Matteo hospital in Pavia, Italy,” Rizzoli notes.

This could help address one of the most confounding aspects of the virus -- seemingly mild cases can turn deadly very quickly. If the models can help clinicians be on the lookout for anomalies that suggest a severe case, they could potentially save lives. “Right now, it is hard to assess the severity of COVID-19 from medical imaging,” Rizzoli explains. “Hospitals have patients that they would not expect going into the ICU. If we can help them understand, ‘hey, this patient looks very similar to these other five patients who got very ill’, they can reconsider how to treat the patient.”

It also might help when patients present with COVID-19 symptoms but don’t test positive, and help in areas of the world that don’t have enough radiologists (allowing clinic doctors, for instance, to understand what they are seeing on a chest X-ray).

Finally, Rizzoli wants this dataset and the machine learning his company is doing to help the research community discover more about other lung diseases -- bacterial and viral pneumonia, pulmonary fibrosis, and more.

We look forward to collaborating with V7 and the healthcare industry on future data annotation discoveries.
