This post is written by Genevieve Patterson. She is a PhD student in 'Computer Vision' at Brown University, Providence, Rhode Island. This post originally appeared on Genevieve’s CS blog and has been republished with permission.

The core of computer vision is concentrated on learning how to automatically recognise things that humans are already awesome at recognising (dogs, cats, sail boats, handwriting, etc.). Humans can recognise these things in approximately 0.3 seconds -- you can recognize a Jack Russell before you can even think the words ‘super cute dog'. Humans are great computers.

A growing cohort of vision researchers are trying to make vision algorithms do things that the average human can’t do. These algorithms can identify insect and bird species, recognise fashionable clothing, even detect in which Parisian arrondissement a photograph was taken.

You may be asking, how is this kind of expert-level recognition possible if understanding general categories of objects is a challenging problem? One solution is to use humans as part of the algorithm.

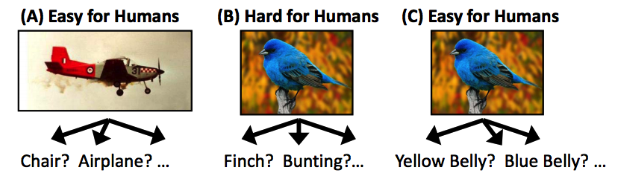

Few non-ornithologists can tell a Finch from a Bunting, and non-fashionistas probably can’t tell Peplum from Ruffles. But pretty much anyone can identify a bird’s head or wing, and even those of us who dress in the dark can divide flat shoes from heels. Human-in-the-loop vision techniques successfully exploit the knowledge of average humans to build classifiers that can identify expert-level objects.

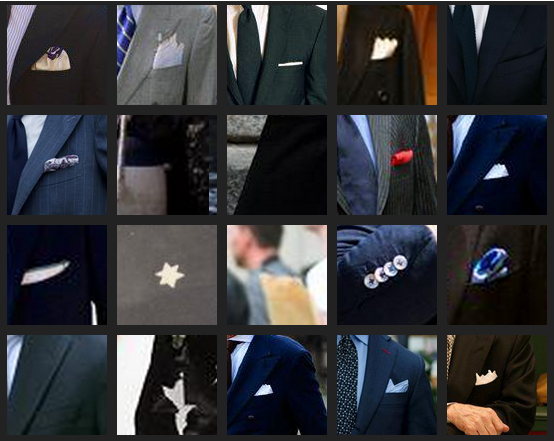

Finding all the Pocket Squares

Example detections from my pocket square classifier. Note these aren’t perfect, but this classifier was created by starting with only 5 training examples and uses a simple linear SVM.

My research involves training hundreds of classifiers to identify tricky visual details. I train very specific classifiers to understand details of clothing, animals, and outdoor or indoor scenes. I’m trying to understand complicated events in pictures by first identifying the tiny parts.

Making classifiers for these tiny parts is difficult because they are often rare and hard for non-expert humans to identify. I’m overcoming this challenge by using a crowd-in-the-loop adaptation of Active Learning.

Active learning is a classic approach for creating classifiers when little or no training data is available. This approach has been successful for face and pedestrian detection. The basic pipeline goes like this: First a very simple classifier is trained with just a few positive examples. Next, this simple classifier is used to detect faces or pedestrians in a large set of images. A human looks at the results and selects the correct and incorrect detections. The algorithm uses the human’s corrections to train a new classifier. This is repeated a dozen or so times. At the end the classifier has high accuracy, but very few training examples were actually used.

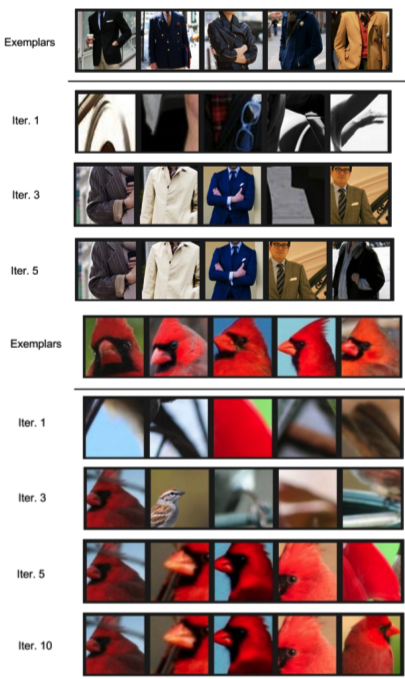

Active learning that employs the crowd to answer the active queries can scale this traditional approach to a large number of fine-grained classifiers. In the figure below, the top row shows 5 examples of something that I want to detect (jackets and the head of a cardinal). Each numbered row shows the output of my classifiers at different iterations of the training process. At each step the classifier is getting better because people are correcting its mistakes. At the end both classifiers get their top 5 detections correct, even though they only started with 5 positive examples (usually these types of classifiers get hundreds of positive examples).

Classifiers made by the crowd

Human-in-the-loop computer vision can help solve problems that are hard for even humans to do themselves. When humans and computers work together we can do incredible things.