Will 2022 be the year when we get into our vehicles, buckle up, lean back, and trust artificial intelligence to take us to the supermarket in our fully self-driving cars?

The answer is…sort of.

For the time being, this experience is only a reality for specific vehicles, in some cities, on selected roads, at certain speeds, while following strict regulations.

The truth is, there are zero fully autonomous cars available for purchase anywhere in the world. That may sound like a letdown, but enormous progress is being made and it’s fair to say that it’s only a matter of time before fully autonomous cars, trucks, and robotaxis transform our society.

The proof is already on American roadways. For example, Waymo, Alphabet’s autonomous driving company (formerly Google’s self-driving car project), is currently operating its driverless ride-hailing service, Waymo One, for the general public in Phoenix.

Likewise, Aurora deploys its autonomous electric trucks (always with two safety drivers) to carry loads for Barcel, the Takis spicy chips and snacks manufacturer, along a route in Texas between its Dallas, Palmer, and Houston terminals. The company also carries freight for FedEx between Dallas and Houston as part of a pilot program.

These types of advances show the promise of a future where autonomous driving is just a normal part of life. But in the near term, most developments will focus on incorporating more and more technologies to keep drivers alert and accident-free.

Advanced Driving Systems and Advanced Driver Assistance Systems: What’s the Difference?

All this talk of autonomous driving makes it a good moment to distinguish automated driving from autonomous driving. As with drone automation and drone autonomy, the two terms are often used interchangeably. They shouldn’t be: they mean different things, and the difference matters when deciding where and when to integrate AI technologies like computer vision.

Here’s the difference. Fully autonomous vehicles use Advanced Driving Systems (ADS) for all driving in all circumstances. The human occupants are just passengers; they never need to operate the vehicle. Close your eyes and think about putting your feet on your car’s dashboard while reading a book. There’s no steering wheel, yet your vehicle navigates safely to your destination. This is an example of a fully autonomous vehicle with an integrated ADS.

Vehicles with Advanced Driver Assistance Systems (ADAS) use multiple sensors and alert systems to help drivers avoid accidents. Since most accidents result from human error, ADAS acts as a driver’s chaperone, alerting them to possible dangers. Close your eyes again and imagine yourself ready to change lanes on the freeway, when a blinking orange light on your side-view mirror and a loud beep alert you to a car coming alongside. This is an example of an automated vehicle with an integrated ADAS.

While ADAS can make driving safer and easier, it does not incorporate AI, whereas ADS does.

Where Do ADS and ADAS Fall in the Levels of Driving Automation?

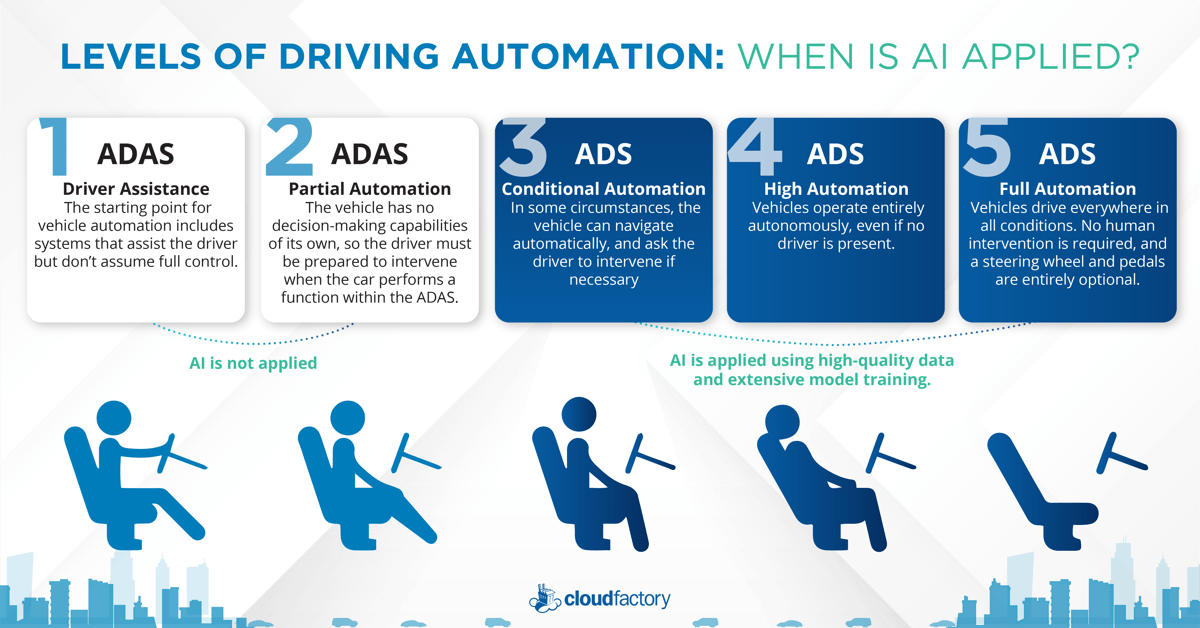

SAE’s Levels of Driving Automation, the global standard for defining driving automation, is applied to ADS and ADAS. Only Levels 3 to 5 require ADS powered by AI models trained using image, sensor, LiDAR, satellite, and video data. Here is a further breakdown:

Level 1 – The starting point for vehicle automation includes systems that assist the driver but don’t assume full control. These include ADAS such as parking sensors and lane centering, which are standard in vehicles with higher trim levels. And because the driver remains hands-on, AI is not at play; the system can’t make decisions by itself.

Level 2 – Level 2 vehicles provide a partially hands-free experience: ADAS handles acceleration, steering, braking, and self-parking automatically. Because the vehicle has no decision-making capabilities of its own, the driver must constantly monitor the operation and be prepared to intervene. AI technology isn’t involved at this level; the driver remains responsible for monitoring and reacting to the environment.

Level 3 – This is where AI and computer vision enter the picture. An ADS on the vehicle can perform all aspects of the driving task under some circumstances, such as driving in traffic with clearly marked lanes. This is the first level of true driving autonomy. The vehicle can drive itself thanks to an extensively trained AI model, which can navigate automatically, synchronize with traffic signals, and ask the driver to intervene if necessary.

In December 2021, Mercedes-Benz became the world's first automaker to gain internationally valid regulatory approval for producing vehicles capable of Level 3 autonomous or "conditionally automated" driving.

Mercedes will be incorporating its Drive Pilot system on S-Class and electric EQS models. With Drive Pilot, cars will operate autonomously during limited traffic situations. The cars will accelerate, steer, brake, and follow navigation commands when there are clear lane markers, dense traffic, and speeds below 38 mph.

Level 3 autonomous driving allows a driver to be completely hands-free while driving at low speeds in traffic.
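To make "under some circumstances" concrete, here is a minimal sketch of the kind of condition check the Drive Pilot description implies. It is not Mercedes-Benz code: the names `DrivingConditions` and `level3_available` are hypothetical, and the only inputs are the three conditions quoted above (clear lane markers, dense traffic, and speeds below 38 mph).

```python
from dataclasses import dataclass

# Threshold quoted in the article; a real system would have its own limits.
SPEED_LIMIT_MPH = 38.0


@dataclass(frozen=True)
class DrivingConditions:
    """Hypothetical snapshot of what the vehicle currently observes."""
    speed_mph: float
    clear_lane_markers: bool
    dense_traffic: bool


def level3_available(conditions: DrivingConditions) -> bool:
    """Return True only when every condition named in the article holds."""
    return (
        conditions.clear_lane_markers
        and conditions.dense_traffic
        and conditions.speed_mph < SPEED_LIMIT_MPH
    )


if __name__ == "__main__":
    traffic_jam = DrivingConditions(speed_mph=25, clear_lane_markers=True, dense_traffic=True)
    open_highway = DrivingConditions(speed_mph=65, clear_lane_markers=True, dense_traffic=False)
    print("Traffic jam:", level3_available(traffic_jam))
    print("Open highway:", level3_available(open_highway))
```

The point of the sketch is that a Level 3 system is conditional: as soon as any one condition fails, control has to go back to the driver.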

Level 4 – An ADS on a Level 4 vehicle uses a highly advanced, adaptive AI model powered by active learning and extensive training. Level 4 vehicles operate entirely autonomously, even if no driver is present.

Cruise began testing its all-electric vehicles without a driver behind the wheel in San Francisco ahead of a planned commercial rollout in 2022. This is the first time Cruise has deployed a Level 4 robotaxi on city streets with no one in the driver’s seat, a development that could preview a future where Cruise-type vehicles replace Uber and Lyft drivers.

Cruise performed millions of miles of testing on its journey to Level 4 Driving Automation.

Level 5 – An ADS on a Level 5 vehicle means the vehicle can self-drive in all circumstances. The highest level of automation is much the same as Level 4, but with the ability to drive everywhere and in all conditions in which a human would normally drive. No human intervention is required, and a steering wheel and pedals are entirely optional. A Level 5 vehicle fully replaces the need for a human driver in every situation.

In December 2020, Zoox unveiled Level 5 robotaxis, which are currently being tested in San Francisco, Las Vegas, and Foster City, California. These taxis feature bi-directional capabilities and give a fantastical view into the next generation of intelligent transportation. Zoox robotaxis remain in the testing phase for now. They use multiple processors to analyze data from dozens of onboard sensors to help developers ensure safety through diversity and redundancy of systems and algorithms.
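The breakdown above can be summarized as a small lookup table. This is only a sketch of the article's framing, not the SAE standard itself: the `AutomationLevel` class, its field names, and the one-line driver roles are illustrative assumptions, and the `uses_ai` flag reflects the ADAS/ADS split described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomationLevel:
    """One row of the article's level breakdown (illustrative, not SAE text)."""
    level: int
    system: str       # "ADAS" or "ADS", as used in this article
    uses_ai: bool     # the article's framing of where AI comes in
    driver_role: str  # short paraphrase of what the human has to do


LEVELS = {
    1: AutomationLevel(1, "ADAS", False, "drives, with assistance"),
    2: AutomationLevel(2, "ADAS", False, "monitors constantly, ready to intervene"),
    3: AutomationLevel(3, "ADS", True, "takes over when the system asks"),
    4: AutomationLevel(4, "ADS", True, "no driver needs to be present"),
    5: AutomationLevel(5, "ADS", True, "none, in all circumstances"),
}


def requires_ads(level: int) -> bool:
    """True for the levels the article says need an AI-powered ADS."""
    return LEVELS[level].system == "ADS"


if __name__ == "__main__":
    for entry in LEVELS.values():
        print(f"Level {entry.level}: {entry.system:<4} | driver: {entry.driver_role}")
```

Filtering the table with `requires_ads` picks out Levels 3 to 5, matching the statement that only those levels require ADS.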

With ADS powered by AI technologies, vehicles can give us more freedom and safely take us where we need to go, with fewer fatalities, shorter commute times, and a smaller hit to the environment. To achieve this transformation at scale, vehicle and component manufacturers need massive amounts of complex training data to train autonomous vehicle (AV) algorithms, data that comes from high-quality LiDAR, sensor, image, and video annotation.

CloudFactory is driving innovation in self-driving cars by labeling the enormous numbers of individual objects in images and videos needed to train machine learning and computer vision models to accurately interpret the world around them.

Learn more about our work with autonomous vehicles here.
